There’s no denying that Generative Artificial Intelligence (GenAI) has been one of the most significant technological developments in recent memory, promising unparalleled advancements and enabling humanity to accomplish more than ever before. By harnessing the power of AI to learn and adapt, GenAI has fundamentally changed how we interact with technology and each other, opening new avenues for innovation, efficiency, and creativity, and revolutionizing nearly every industry, including cybersecurity. As we continue to explore its potential, GenAI promises to rewrite the future in ways we are only beginning to imagine.

Good Vs. Evil

Fundamentally, GenAI has no ulterior motives. Put simply, it’s neither good nor evil. The same technology that allows someone who has lost their voice to speak also allows cybercriminals to reshape the threat landscape. We have seen bad actors leverage GenAI in myriad ways, from writing more effective phishing emails or texts, to creating malicious websites or code, to generating deepfakes to scam victims or spread misinformation. These malicious activities have the potential to cause significant damage to an unprepared world.

In the past, cybercriminal activity was held back by constraints such as limited knowledge or limited manpower. This is evident in the previously time-consuming work of crafting phishing emails or texts. A bad actor was typically limited to the languages they could speak or write, and if they targeted victims outside their native language, the messages were often filled with poor grammar and typos. Perpetrators could use free or cheap translation services, but even those could not translate syntax fully and accurately. Consequently, a phishing email written in language X and translated into language Y typically came out sounding awkward, and most people would ignore it because it clearly “doesn’t look legit”.

With the introduction of GenAI, many of these constraints have been eliminated. Modern Large Language Models (LLMs) can write entire emails in less than 5 seconds, in any language and in any writing style. These models do so by accurately translating not just words, but also syntax between different languages, resulting in crystal-clear messages that are free of typos and just as convincing as any legitimate email. Attackers no longer need to know even the basics of another language; they can trust that GenAI is doing a reliable job.

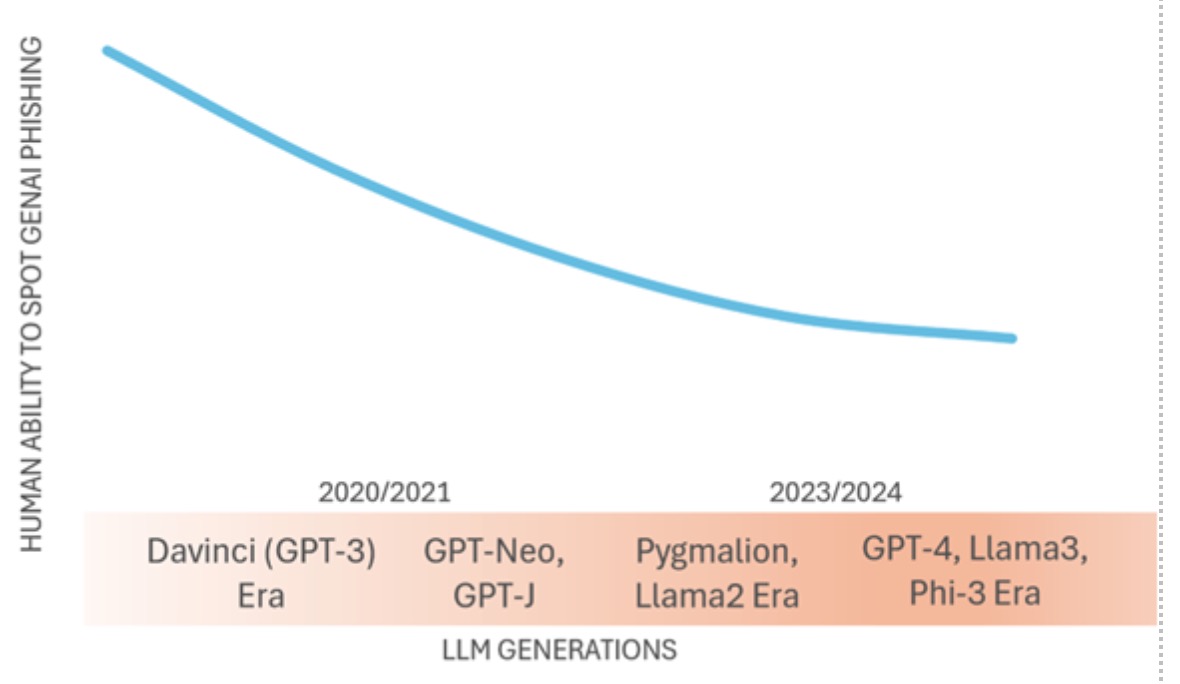

McAfee Labs tracks these trends and periodically runs tests to validate our observations. It has been noted that earlier generations of LLMs (those released in the 2020 era) were able to produce phishing emails that could compromise 2 out of 10 victims. However, the results of a recent test revealed that newer generations of LLMs (2023/2024 era) are capable of creating phishing emails that are much more convincing and harder to spot by humans. As a result, they have the potential to compromise up to 49% more victims than a traditional human-written phishing email1. Based on this, we observe that humans’ ability to spot phishing emails/texts is decreasing over time as newer LLM generations are released:

Figure 1: how human ability to spot phishing diminishes as newer LLM generations are released

This creates an inevitable shift, where bad actors are able to increase the effectiveness and ROI of their attacks while victims find it harder and harder to identify them.

Bad actors are also using GenAI to assist in malware creation. While GenAI can’t (as of today) create malware code that fully evades detection, it is significantly aiding cybercriminals by shortening the time-to-market for malware authoring and delivery. What’s more, malware creation, historically the domain of sophisticated actors, is becoming more and more accessible to novice bad actors, as GenAI compensates for lack of skill by helping develop snippets of code for malicious purposes. Ultimately, this creates a more dangerous overall landscape, where all bad actors are leveled up thanks to GenAI.

Fighting Back

Since the clues we used to rely on are no longer there, subtler methods are required to detect dangerous GenAI content. Context is still king, and that’s what users should pay attention to. Next time you receive an unexpected email or text, ask yourself: Am I actually subscribed to this service? Does the alleged purchase date match my credit card charges? Does this company usually communicate this way, or at all? Did I originate this request? Is it too good to be true? If you can’t find good answers, then chances are you are dealing with a scam.

The good news is that defenders have also created AI to fight AI. McAfee’s Text Scam Protection uses AI to dig deeper into the underlying intent of text messages to stop scams, and AI specialized in flagging GenAI content, such as McAfee’s Deepfake Detector, can help users browse digital content with more confidence. Being vigilant and fighting malicious uses of AI with AI will allow us to safely navigate this exciting new digital world and confidently take advantage of all the opportunities it offers.

The post The Dark Side of Gen AI appeared first on McAfee Blog.